Abstract

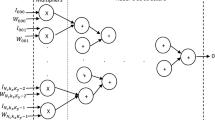

As the application scenarios of convolutional neural networks (CNNs) grow more complex, general-purpose CNN accelerators based on matrix multiplication have become a research focus. Existing methods for mapping convolution onto matrix multiplication leave room for improvement. This paper proposes a dynamic mapping model that improves the flexibility and versatility of matrix-multiplication-based acceleration. The model implements two algorithms: the dynamic residue processing mapping algorithm (DRPMA) and the dilated convolution mapping algorithm (DCMA). DRPMA dynamically adjusts the mapping according to the number of output channels of the convolution layer, improving the utilization of the multiply-accumulate (MAC) array. DCMA adds efficient support for dilated CNNs. For demonstration, we implement an accelerator in Verilog on a Xilinx VC709 FPGA board and test several typical CNN models. Experimental results show that the general accelerator achieves high performance and energy efficiency.
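To make the two ideas in the abstract concrete, the following is a minimal Python sketch, not the paper's DRPMA or DCMA. It assumes a single-channel input, stride 1, and no padding, and shows (a) the generic im2col way of turning a (possibly dilated) convolution into a matrix multiplication, and (b) why the number of output channels affects MAC array utilization when channels are tiled across a fixed number of array columns. The function names `im2col`, `conv_as_matmul`, and `mac_column_utilization`, and the column-tiling model itself, are illustrative assumptions rather than details taken from the paper.

```python
from math import ceil


def im2col(image, k, dilation=1):
    """Unfold a 2D image (list of rows) into a patch matrix.

    Each output row is one flattened k x k patch. Stride is 1, there is
    no padding, and kernel taps are `dilation` pixels apart.
    """
    h, w = len(image), len(image[0])
    span = dilation * (k - 1) + 1  # effective extent of the dilated kernel
    rows = []
    for i in range(h - span + 1):
        for j in range(w - span + 1):
            rows.append([image[i + dilation * a][j + dilation * b]
                         for a in range(k) for b in range(k)])
    return rows


def conv_as_matmul(image, kernels, k, dilation=1):
    """Compute the convolution as (patch matrix) x (kernel matrix).

    `kernels` holds one flattened k*k kernel per output channel. The
    result has one row per output pixel and one column per channel.
    """
    patches = im2col(image, k, dilation)
    return [[sum(p * q for p, q in zip(patch, kern)) for kern in kernels]
            for patch in patches]


def mac_column_utilization(out_channels, array_cols):
    """Fraction of MAC columns doing useful work under a fixed mapping.

    Assumed model: output channels are processed in passes of
    `array_cols` columns, so a partial last pass leaves columns idle.
    """
    passes = ceil(out_channels / array_cols)
    return out_channels / (passes * array_cols)
```

Under this assumed model, a layer with 48 output channels on a 32-column array needs two passes and keeps only 75% of the columns busy; that residue in the last pass is the kind of inefficiency a dynamic mapping aims to reduce.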

This work was supported by the National Science and Technology Major Projects on Core Electronic Devices, High-End Generic Chips and Basic Software under Grant No. 2018ZX01028101 and by the National Natural Science Foundation of China Key Program No. 61732018.

Copyright information

© 2021 IFIP International Federation for Information Processing

About this paper

Cite this paper

Zhao, X., Jiang, J., Han, Z., Xu, J., Liu, Z. (2021). A Dynamic Mapping Model for General CNN Accelerator Based on FPGA. In: He, X., Shao, E., Tan, G. (eds) Network and Parallel Computing. NPC 2020. Lecture Notes in Computer Science, vol 12639. Springer, Cham. https://doi.org/10.1007/978-3-030-79478-1_2

DOI: https://doi.org/10.1007/978-3-030-79478-1_2

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-79477-4

Online ISBN: 978-3-030-79478-1

eBook Packages: Computer Science, Computer Science (R0)