Abstract

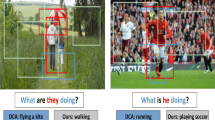

Visual Question Answering (VQA) is a joint task that aims to answer questions about given images. Correctly analysing questions that aggregate over multiple photo albums remains a key issue in VQA: when a question spans several albums, the model must correctly understand both the album images and the corresponding question. With multiple albums and scene words present in the question, the model may identify the wrong scene and output the wrong answer, which lowers VQA performance. To address this problem, this paper proposes a new image and sentence similarity matching model, which outputs the correct image representation by learning semantic concepts. Because a scene word is not an entity, the information the model extracts for it may sometimes be incorrect. We therefore reanalyse the question in a different way and derive the answer from the similarity between the question and the visual-text. Our model was tested on the MemexQA dataset. The experimental results show that our model not only produces meaningful text sentences that justify the answer, but also improves accuracy by nearly 10%.
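As a rough illustration of answering by question-to-text similarity, the sketch below scores a question against candidate visual-text sentences and selects the closest one. It is not the model from the paper: the bag-of-words vectors stand in for learned representations, and the function names (`bag_of_words`, `cosine_similarity`, `best_match`) and the example sentences are hypothetical.

```python
import math
from collections import Counter


def bag_of_words(sentence):
    """Lowercased, whitespace-tokenised term counts (a stand-in for learned embeddings)."""
    return Counter(sentence.lower().split())


def cosine_similarity(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(question, visual_texts):
    """Return the index of the most similar visual-text sentence and all scores."""
    if not visual_texts:
        raise ValueError("visual_texts must not be empty")
    q = bag_of_words(question)
    scores = [cosine_similarity(q, bag_of_words(t)) for t in visual_texts]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores


# Hypothetical question and per-photo text descriptions.
question = "where did we go hiking last summer"
visual_texts = [
    "a birthday cake on a table at home",
    "two people hiking on a mountain trail in summer",
    "a dog playing on the beach",
]
index, scores = best_match(question, visual_texts)
print(visual_texts[index])
```

In a learned model the term counts would be replaced by sentence and image embeddings, but the selection step, ranking candidates by similarity to the question, would keep the same shape.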

The first author of this paper is a student. This work was supported by the National Natural Science Foundation of China (No. 61373104, No. 61405143); the Excellent Science and Technology Enterprise Specialist Project of Tianjin (No. 18JCTPJC59000) and the Tianjin Natural Science Foundation (No. 16JCYBJC42300).

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Jiang, S. et al. (2019). Semantic Reanalysis of Scene Words in Visual Question Answering. In: Lin, Z., et al. Pattern Recognition and Computer Vision. PRCV 2019. Lecture Notes in Computer Science, vol 11857. Springer, Cham. https://doi.org/10.1007/978-3-030-31654-9_40

DOI: https://doi.org/10.1007/978-3-030-31654-9_40

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-31653-2

Online ISBN: 978-3-030-31654-9

eBook Packages: Computer Science, Computer Science (R0)